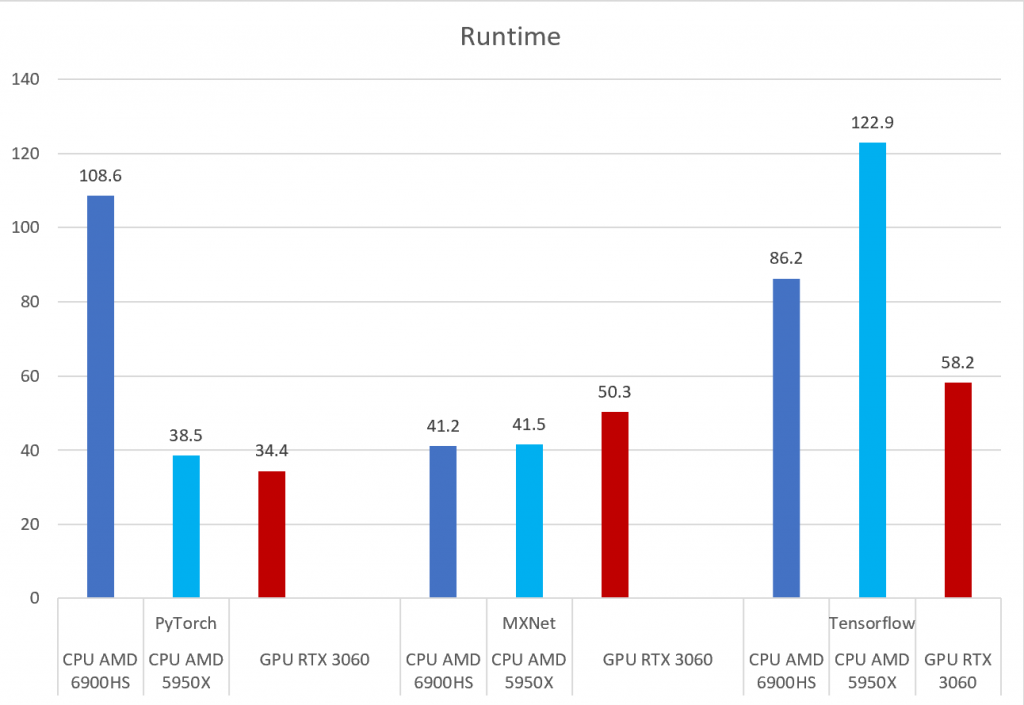

PyTorch, Tensorflow, and MXNet on GPU in the same environment and GPU vs CPU performance – Syllepsis

The NVIDIA GeForce RTX 3060 Ti posts strong performances in CUDA, OpenCL and Vulkan benchmarks - NotebookCheck.net News

Setting up PyTorch and TensorFlow on a Windows Machine | by Syed Nauyan Rashid | Red Buffer | Medium

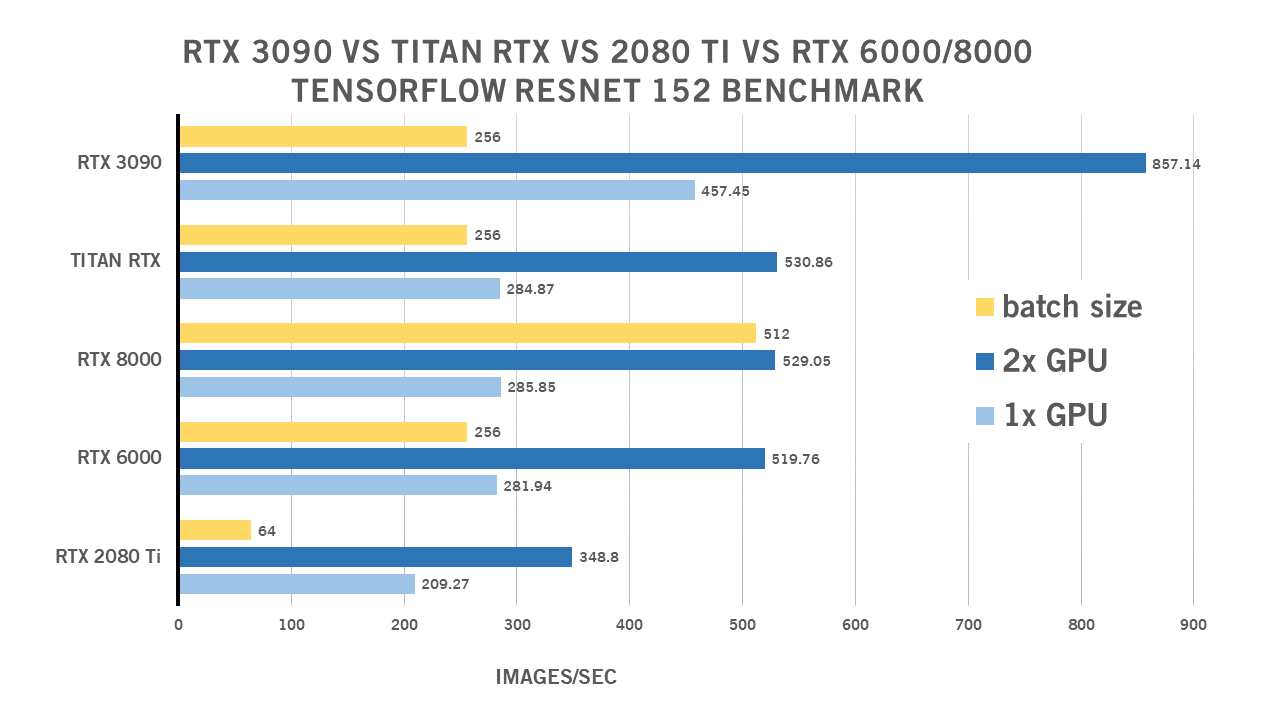

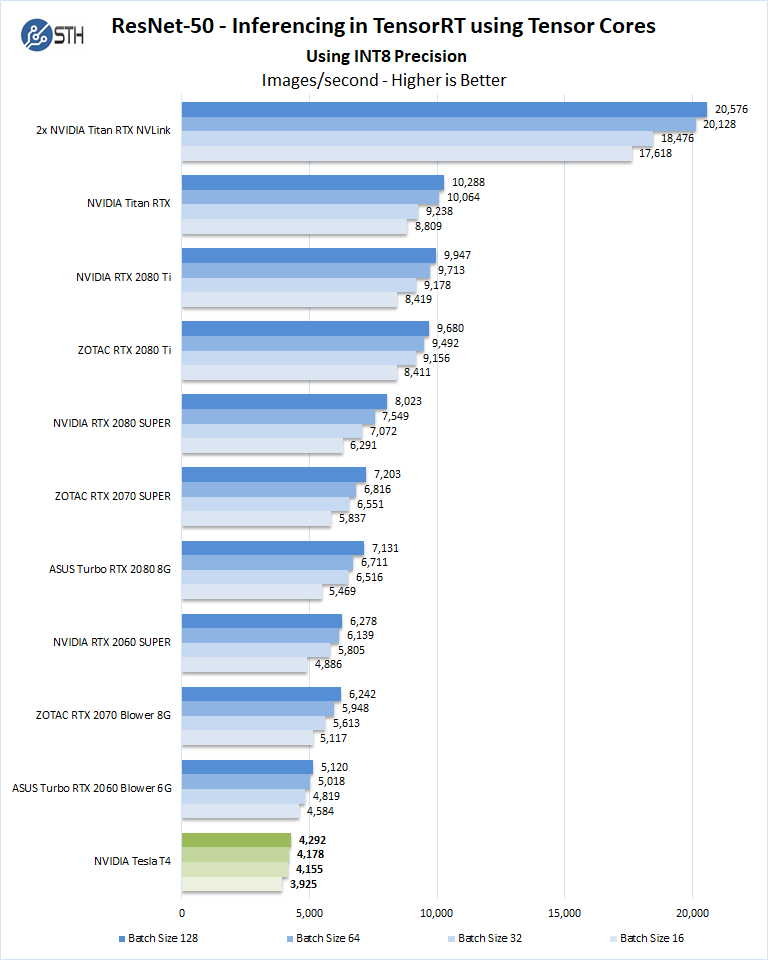

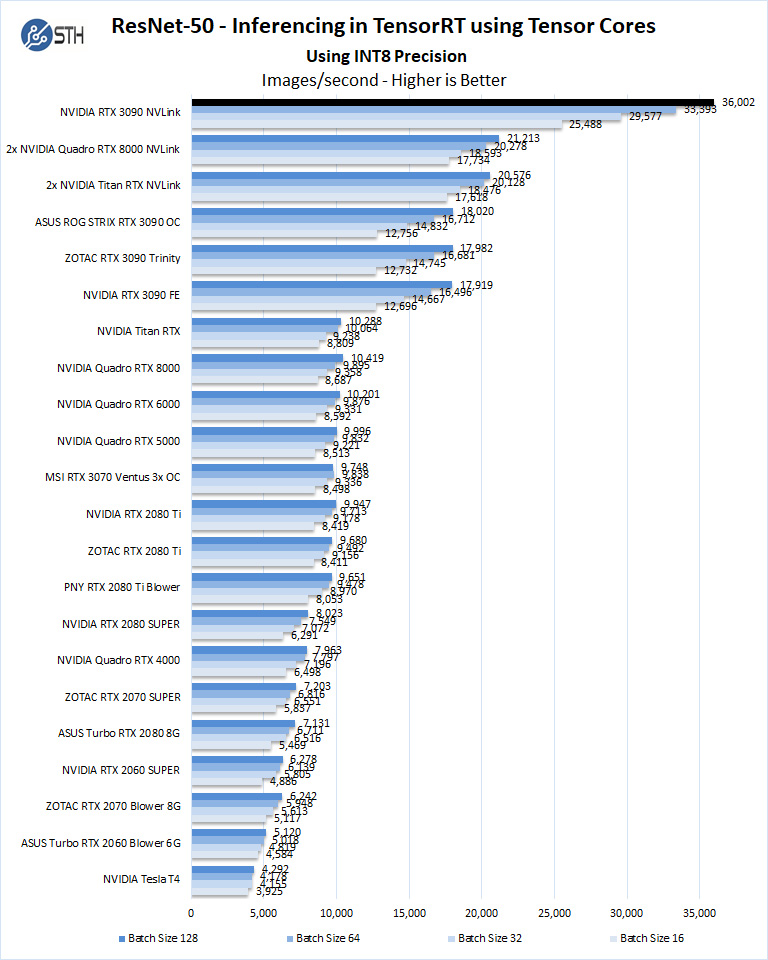

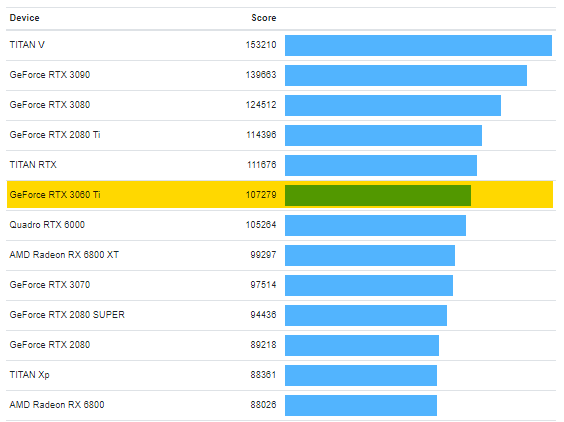

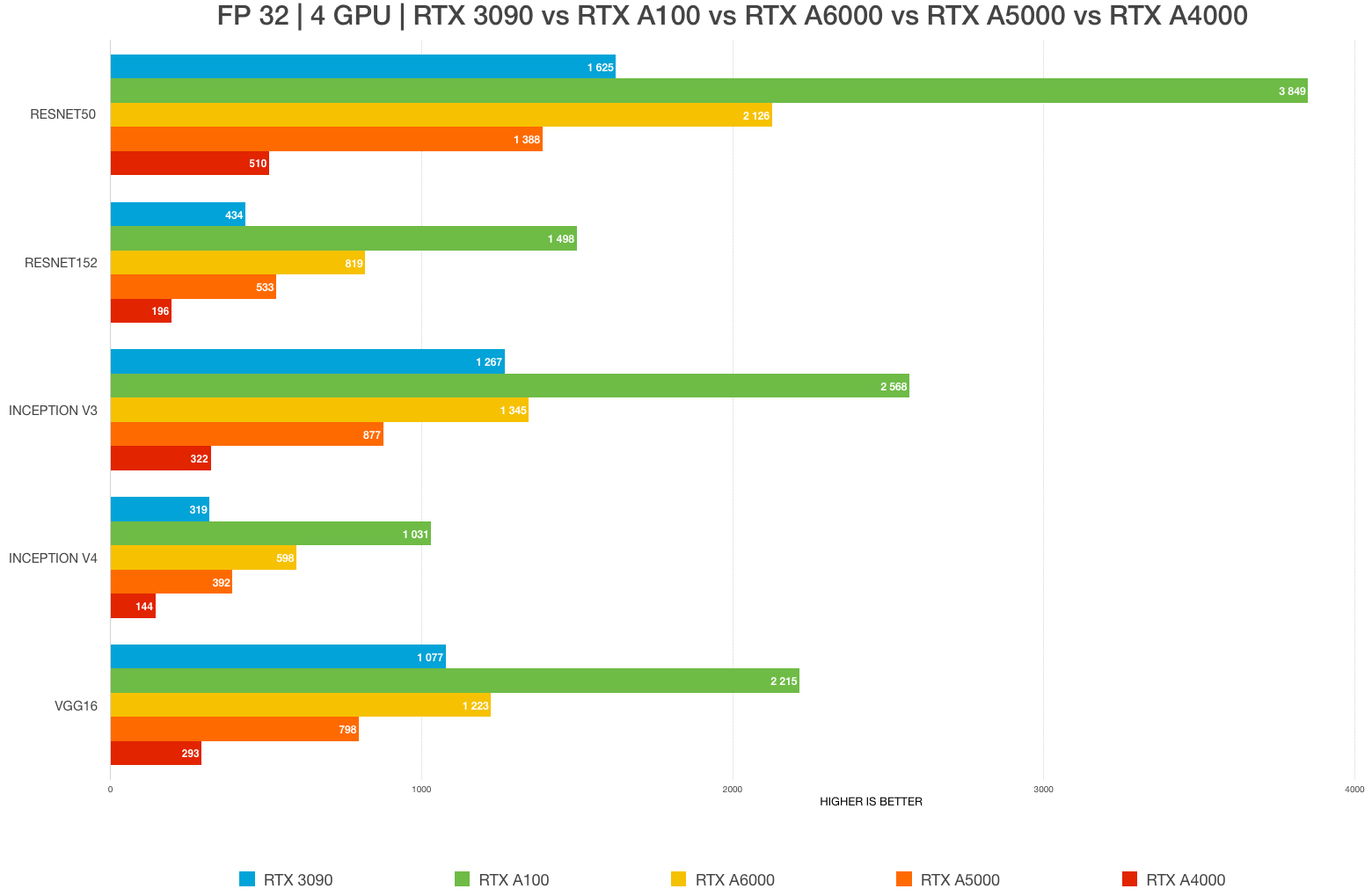

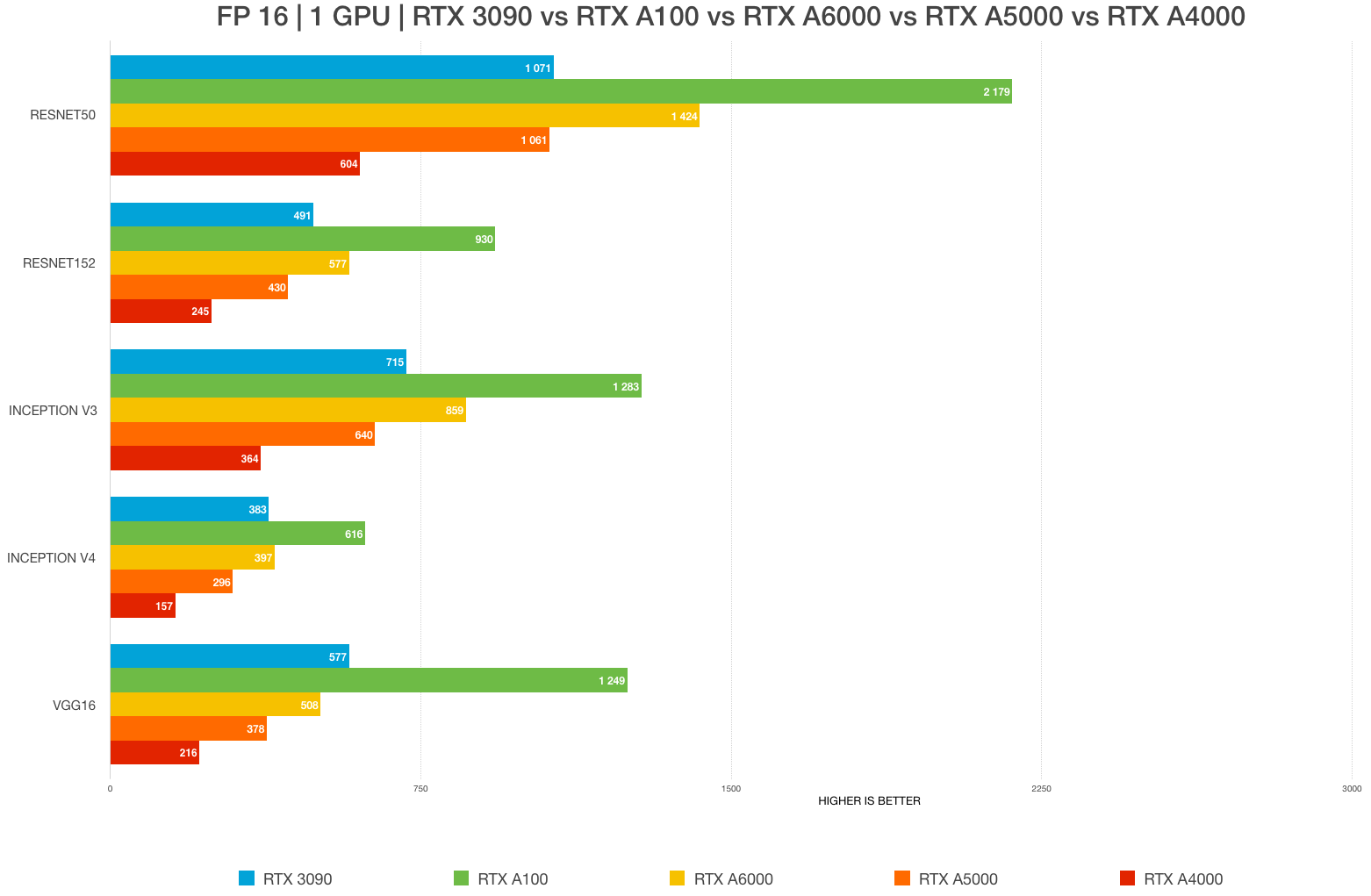

Best GPU for AI/ML, deep learning, data science in 2023: RTX 4090 vs. 3090 vs. RTX 3080 Ti vs A6000 vs A5000 vs A100 benchmarks (FP32, FP16) – Updated – | BIZON

RTX 3060 Ti is approximately x1.5 slower compared to RTX 2080 Super · Issue #46043 · tensorflow/tensorflow · GitHub

NVIDIA Introduces GeForce RTX 3060, Next Generation of the World's Most Popular GPU - Edge AI and Vision Alliance
